The AI Glossary: LLM and AI Terms Explained

As AI seeps into our work and personal lives, there are a lot of technical terms that are being thrown around without explanation. Some of the language is coming directly from AI researchers and developers, others are buzzwords that have quickly become tech favorites, and then there's simply marketing-invented words and product names that we have no choice but to accept.

For me, any time I come across unfamiliar AI vocabulary, I initially feel like I'm completely behind some big movement. Did I sleep on something? But then I realize I'm swimming in a super niche community who is doing what niche communities do - use acronyms and jargon that are efficient for insiders and also signal "one of us."

But the fact that these niche communities show up in my feeds, or in news stories, then it's usually a sign that something is brewing. So if you really do feel behind, or you want to get ahead of the curve and begin to understand the language of AI, I've put together this glossary as a start.

I've grouped the terms into categories so you can jump around. Use the search box to filter, or click a category to jump to it. If a term isn't here, it's probably because it's either I forgot or I'm still behind the times — this space moves fast. Let me know if you have suggestions to add to this glossary.

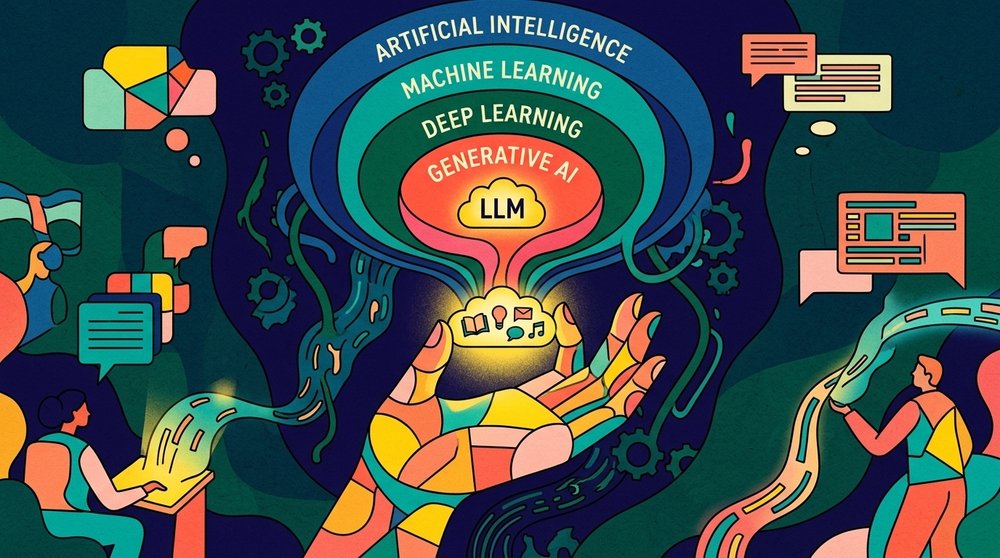

The Basics

You can skip this section if you're already fairly AI-fluent. But I don't want to assume everyone knows how AI fits in with DL, ML and GenAI and LLMs.

Artificial Intelligence (AI)

Artificial intelligence is a broad term for software that performs tasks we usually associate with human thinking — recognizing images, writing text, solving problems, making decisions. Modern AI is mostly about training machines on huge amounts of data rather than hand-coding rules.

Machine Learning (ML)

Machine learning is a branch of AI where software learns patterns from data instead of being explicitly programmed. If AI is the field, machine learning is the main technique used to get there today.

Deep Learning (DL)

Deep learning is a type of machine learning that uses neural networks with many layers. Most of the AI you use — ChatGPT, image generators, voice assistants — runs on deep learning under the hood.

Neural Network

A neural network is the type of software at the heart of every modern AI. It's loosely inspired by the brain — many small calculation units arranged in layers, where each unit takes in some numbers, does a small bit of math, and passes its result to the next layer. With enough layers and enough training data, the network can learn to recognize patterns in almost anything: words, images, sounds, you name it.

Generative AI (GenAI)

Generative AI is AI that creates new content — text, images, code, video, audio — rather than just classifying or predicting. ChatGPT, Midjourney, and Claude are all generative AI tools.

Large Language Model (LLM)

A large language model is an AI system trained on enormous amounts of text to predict the next word in a sequence. That simple objective turns out to produce something that can write, reason, translate, and code. ChatGPT, Claude, and Gemini are all LLMs.

Small Language Model (SLM)

A small language model is a stripped-down LLM that's small enough to run on a phone, laptop, or edge device. They trade raw power for speed, privacy, and the ability to work offline.

GPT (Generative Pre-trained Transformer)

GPT stands for Generative Pre-trained Transformer — the architecture and training approach behind OpenAI's models. "Generative" because it produces new text, "pre-trained" because it learns general patterns from massive datasets before any task-specific tuning, and "transformer" for the architecture underneath. The acronym started as a technical description and became OpenAI's brand (GPT-3, GPT-4, GPT-5), but the same underlying approach powers most modern LLMs.

Foundation Model

A foundation model is a large, general-purpose AI model trained on broad data — text, code, images — that can serve as the starting point for many different tasks. GPT-5, Claude Opus, and Gemini are foundation models. Most AI apps you use are built on top of one, with extra prompting or fine-tuning to specialize it for a narrower job.

Frontier Model

A frontier model is one of the current most-capable AI systems — the ones pushing the edge of what's possible. It's basically a research-and-marketing term for the state of the art.

SOTA (State of the Art)

A SOTA model is one that achieves the best known performance on a specific benchmark or task — "SOTA on MMLU," "SOTA on coding evals." More precise than frontier model, which is used loosely for any model at the cutting edge overall. A model can be SOTA on one benchmark without being a frontier model.

Transformer

The transformer is the underlying design — the blueprint — of nearly every modern LLM. It defines how the model is structured internally and how data flows through it as the model reads input and produces output. It was introduced in a 2017 Google paper called "Attention Is All You Need", and it's the reason modern AI got so good so fast.

Attention Is All You Need

"Attention Is All You Need" is the 2017 Google research paper that introduced the transformer architecture. It's arguably the most important AI paper of the past decade — every modern LLM, from GPT to Claude to Gemini, descends from the ideas in those eight pages. If you ever want to point to the exact moment the current AI era started, this is it.

How LLMs Actually Work

Token

A token is the basic unit an LLM reads and writes. It's usually a word, part of a word, or a character. The sentence "AI is wild" is about four tokens. When companies charge for API usage or talk about context windows, they're counting in tokens.

Tokenization

Tokenization is the process of breaking input text into tokens so the model can process it. Different models tokenize differently, which is one reason the same prompt behaves slightly differently across AI tools.

Parameters

Parameters are the internal numbers inside a model that were tuned during training. A "70 billion parameter" model has 70 billion of these knobs. More parameters generally means more capability, but also more compute to run.

Weights

Weights are another word for parameters — the specific values the model learned. When people talk about "open weights" models, they mean the trained model's numbers are released publicly so anyone can run it themselves.

Context Window

The context window is how much text (in tokens) an LLM can consider at once — both your input and its output. Claude 4.7 has a 1 million token context window, which is hundreds of pages. Run past the limit and the model forgets the start of the conversation.

Training

Training is the process of feeding a model huge amounts of data and adjusting its parameters until it gets good at predicting patterns. Training a frontier model costs tens or hundreds of millions of dollars in compute.

Pretraining

Pretraining is the first and most expensive phase of training, where the model learns general language patterns from massive datasets scraped from the internet, books, and code. Everything you've heard about "trained on the whole internet" refers to pretraining.

Post-training

Post-training is everything that happens after pretraining to shape the model's behavior — fine-tuning on curated data, teaching it to follow instructions, making it safer. It's where a raw language predictor becomes something useful like ChatGPT.

Embedding

An embedding is a way of turning a piece of text — or an image, or audio — into a list of numbers that represents its meaning. Texts with similar meanings end up with similar number lists, so a computer can find related content even when the exact words don't match. It's the trick behind search-by-meaning, recommendations, and most of how RAG works.

Vector

A vector is a list of numbers — and in AI, it's almost always an embedding. When people say they're "vectorizing" a document, they mean turning it into a list of numbers a computer can compare to other lists of numbers.

Vector Database

A vector database is a database optimized for storing and searching embeddings. It's the backbone of most RAG systems, because finding "the most similar" content at scale is a different problem from traditional database queries.

Attention

Attention is the trick inside a transformer that lets the model decide which earlier words matter most when predicting the next one. When you write a long prompt, attention is what helps the model connect "it" in the last sentence back to a noun mentioned three paragraphs earlier. It's the reason LLMs can keep track of long, complex inputs.

Inference

Inference is what happens when you actually use a trained model — you give it an input and it produces an output. Training is expensive but happens once; inference runs every time someone uses the model.

Training Data

Training data is the collection of text, images, code, or other content a model learns from during training. The quality and diversity of training data directly shape what a model is good at — and most frontier model makers won't disclose exactly what theirs was trained on.

Temperature

Temperature is a setting that controls how random an LLM's output is. Low temperature means predictable, repetitive answers. High temperature means more creative — and more unreliable — answers.

Mixture of Experts (MoE)

A Mixture of Experts model is split into many smaller models ("experts"), each trained to be good at a different kind of input — code, math, conversation, languages, and so on. When you send a prompt, the model routes each piece of it to just a couple of relevant experts instead of running the whole thing. The result: a model with the knowledge of a giant model that only uses a fraction of itself at any given moment, which makes it much faster and cheaper to run. DeepSeek, Mixtral, and Llama 4 all use this design. The opposite is a dense model.

Dense Model

A dense model is the traditional LLM design where every part of the model is used for every prompt — there's no routing or splitting like in a Mixture of Experts. Llama 3 and most older LLMs are dense. Simpler design, but slower and more expensive to run at the same total size.

KV Cache

The KV cache (short for key-value cache) is a chunk of memory an LLM keeps as it generates a response. Each new word it produces requires looking back at everything that came before it — the cache stores that work so the model doesn't have to redo it for every word. It's also why long conversations slowly use more memory and get a little slower the longer they go.

Tensor

A tensor is a grid of numbers — sometimes a flat list, sometimes a 2D table, sometimes a stack of tables, sometimes higher-dimensional. It's the basic data structure AI models work with: every input, every internal calculation, and every output is a tensor. When you hear "tensor cores" or "TPU," that's hardware built specifically to crunch tensor math very fast.

Getting More Out of Them

Prompt

A prompt is the input you give an AI to get a response. "Write me a poem about sourdough" is a prompt. The whole discipline of prompt engineering sprung up because how you phrase things matters a lot.

Prompt Engineering

Prompt engineering is the practice of crafting prompts to get better, more accurate, or more specific outputs from an LLM. It started as a buzzword-worthy skill and is now just part of working with AI.

System Prompt

A system prompt is a hidden instruction set that shapes how an AI behaves before any user message. It's the "you are a helpful assistant" layer sitting behind every chatbot, including rules, tone, and constraints.

Zero-shot Prompting

Zero-shot prompting is when you ask a model to do a task without giving it any examples. Modern LLMs are good enough that zero-shot usually works just fine.

Few-shot Prompting

Few-shot prompting is when you include a few examples of the task inside your prompt before asking the model to do it. Useful for formatting or style tasks where showing beats telling.

Chain of Thought (CoT)

Chain of thought is a prompting pattern where the model is asked to reason step-by-step before answering. It dramatically improves performance on math and logic problems, and it's now baked into reasoning models by default.

Reasoning Model

A reasoning model is an LLM trained to "think" before answering, producing hidden chains of thought that improve accuracy on hard problems. They're slower and more expensive to run, but much better at math, coding, and logic.

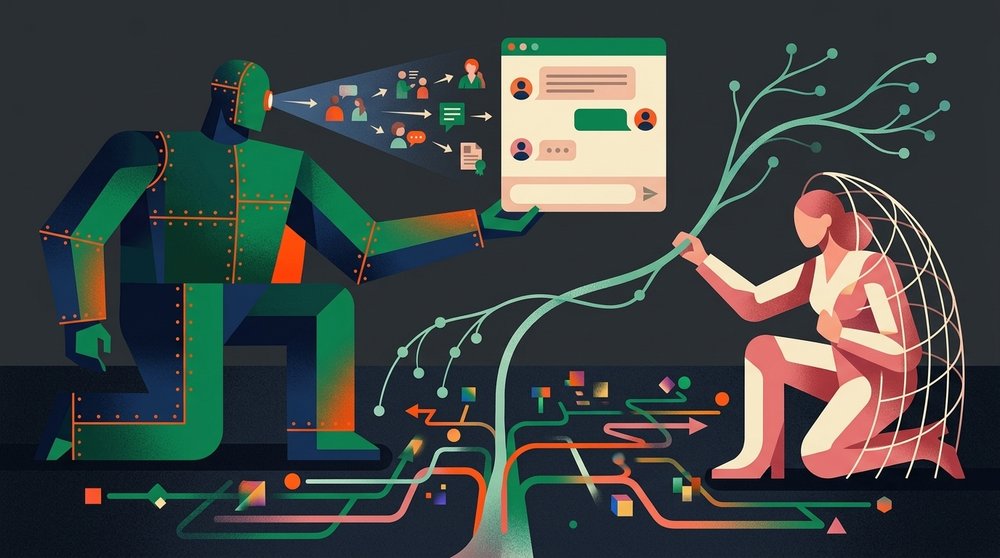

RAG (Retrieval-Augmented Generation)

RAG is a technique that combines an LLM with a search step. When you ask a question, the system first retrieves relevant documents from a database, then feeds them to the model along with your question. This is how AI tools stay current or answer questions about your private data.

Chunking

Chunking is the process of breaking large documents into smaller pieces before feeding them into a RAG pipeline. How you chunk — by paragraph, by topic, by token count — has a huge impact on how well the system finds the right material later. Chunk too small and you lose context; chunk too big and the search gets fuzzy.

Retrieval

Retrieval is the "R" in RAG — the step where the system pulls relevant content from a database to feed the model. It can match by meaning (using embeddings), by exact keywords, or by a combination of both.

Reranker

A reranker is a second pass in a retrieval pipeline that takes a batch of candidate results and reorders them by actual relevance. Rerankers are usually small, purpose-built models — a cheap way to improve RAG quality a lot.

Context Engineering

Context engineering is the evolving discipline of shaping everything an AI sees before it responds — system prompt, retrieved documents, prior conversation, tool outputs, and more. It's the more serious descendant of "prompt engineering," reflecting the reality that modern agents consume a lot more than a single user prompt.

Fine-tuning

Fine-tuning is taking a pretrained model and training it further on a smaller, specialized dataset. It's how you get a model that really knows your company's voice, your legal domain, or your customer data.

RLHF (Reinforcement Learning from Human Feedback)

RLHF is a training technique where humans rate the model's outputs, and those ratings are used to nudge the model toward better behavior. It's a big reason modern LLMs are polite, helpful, and mostly refuse to help you do sketchy things.

Distillation

Distillation is the process of training a small model to mimic a big one. You get something faster and cheaper to run, with a lot of the original's capability.

Quantization

Quantization is a compression technique that shrinks a model by storing its weights as smaller, less precise numbers. Imagine the model originally remembered the value 0.7184635 and the quantized version rounds it to 0.72 — the file shrinks dramatically, and the model still works almost as well. Quantized models are smaller and faster, and slightly less accurate (often not enough to notice). If you're browsing local model files you'll see labels like Q4_K_M or Q8_0 — those are quantization levels. Lower numbers mean smaller and faster; higher numbers mean closer to the original quality.

Modern AI Tools and Interfaces

Agent

An AI agent is an LLM that can take actions — browse the web, call APIs, run code, update files — not just generate text. Agents loop: take an action, see the result, decide what to do next.

Agentic AI

Agentic AI is the broader trend of giving LLMs more autonomy to complete multi-step tasks on their own. It's the difference between a model that answers questions and one that actually gets stuff done.

Tool Use (Function Calling)

Tool use, also called function calling, is when an LLM can invoke external tools — a calculator, a search engine, a code runner — to produce better answers. It's the foundation every AI agent is built on.

MCP (Model Context Protocol)

MCP is an open protocol introduced by Anthropic that standardizes how AI agents connect to external tools and data sources. Think of it like USB for AI — instead of every tool needing a custom integration, MCP gives them a common plug.

Multimodal

A multimodal model can handle more than one type of input or output — text, images, audio, video. GPT-5, Gemini, and Claude 4.7 are all multimodal.

Vision Model

A vision model is an AI that can interpret images. Most modern LLMs have vision baked in, which is why you can paste a screenshot into ChatGPT and have a conversation about it.

Computer Use

Computer use is a capability where an AI agent can control a computer the way a human would — clicking, typing, scrolling, navigating apps. For a real-world example, see my post on Claude in Chrome.

Claude Code

Claude Code is Anthropic's command-line tool for working with Claude on software projects. It runs in your terminal, reads and edits files, executes commands, and can be extended with Skills and MCP servers. It's one of the main tools behind the current vibe coding wave.

Cursor

Cursor is an AI-first code editor, forked from VS Code and rebuilt around AI assistance. Alongside Claude Code and GitHub Copilot, it's one of the most popular tools for AI-assisted development.

Skills

In the Claude ecosystem, Skills are reusable capability packs that extend what the AI can do. Each one bundles instructions, tools, and example workflows for a specific job — like reviewing code, summarizing a meeting, or running a security check. You invoke a skill by typing /skill-name (similar to a keyboard shortcut) and Claude switches into that mode for the task.

Diffusion Model

A diffusion model is the type of AI behind most image and video generators. It starts with a canvas of random pixel noise — like TV static — and gradually transforms it, step by step, into a coherent image that matches your prompt. Midjourney, DALL-E, and Stable Diffusion are all diffusion models.

Text-to-Image

Text-to-image is any AI that generates images from a written prompt. "A corgi astronaut surfing on Mars" becomes a picture.

Text-to-Video

Text-to-video is the same idea but for video. Tools like Sora and Runway can generate short clips from text descriptions, though quality and length are still limited.

Running AI Locally

Local LLM

A local LLM is a model that runs entirely on your own computer instead of calling a cloud API. Slower and less capable than the frontier models, but private, offline, and free to run once set up. The gap is closing faster than most people realize.

Hugging Face

Hugging Face is the GitHub of AI — the central hub where researchers and companies publish models, datasets, and demos. If you're downloading an open-weights model, you're almost certainly getting it from Hugging Face.

Ollama

Ollama is the easiest way for most people to run a local LLM. You install it, type ollama run llama3, and you have a chatbot running on your laptop. It handles downloads, quantization, and serving behind the scenes.

LM Studio

LM Studio is a desktop app for running local models with a graphical interface. Like Ollama, but click-based instead of command-line — better for people who don't live in a terminal.

llama.cpp

llama.cpp is the open-source engine that powers most local LLM tools, including Ollama and LM Studio. It's written in C++ and optimized to run large models on consumer hardware (CPUs, Apple Silicon, ordinary GPUs) instead of data-center chips.

GGUF

GGUF is the file format most local LLMs come in. It bundles the model's weights, metadata, and quantization into a single file that llama.cpp and friends can load. If you see a .gguf extension, that's a quantized model ready to run locally.

Safetensors

Safetensors is a file format for storing model weights that's safer and faster to load than the older .pt or .bin formats. Most models on Hugging Face are published as safetensors alongside or instead of GGUF.

VRAM

VRAM is the memory on your graphics card. It's the main bottleneck for running local LLMs — a model's size in GB needs to roughly fit in your VRAM for it to run fast. 8GB gets you small models; 24GB runs most quantized mid-sized models; 48GB+ starts approaching serious territory.

CUDA

CUDA is Nvidia's software platform for running general-purpose math on their GPUs. It's the reason Nvidia dominates AI — almost every training framework, inference engine, and local tool is built on CUDA first and other hardware second.

NPU (Neural Processing Unit)

An NPU is a chip designed specifically for AI workloads, built into modern laptops and phones alongside the CPU and GPU. Apple Silicon's Neural Engine and the "Copilot+ PC" chips are NPUs. They make small models run fast and efficiently without a dedicated graphics card.

MLX

MLX is Apple's machine learning framework, designed specifically for Apple Silicon (M-series Macs). It takes advantage of unified memory, which lets Macs run larger models than you'd expect for their price — a 128GB M-series Mac can load models that'd require a $10,000+ Nvidia rig.

Unsloth

Unsloth is a popular open-source library for fine-tuning LLMs much faster and with far less VRAM than the standard tools. If you're training a LoRA on a single GPU, there's a good chance you're using Unsloth under the hood.

LoRA (Low-Rank Adaptation)

LoRA is a fine-tuning shortcut. Instead of re-training a large model from scratch — which takes a data center — LoRA leaves the original model untouched and trains a small "patch" on top of it. The patch is a tiny file you can attach to or remove from the base model on demand. Popular for character or art-style add-ons in Stable Diffusion, and for cheap custom LLM tuning.

QLoRA

QLoRA is LoRA on a quantized base model. Same idea as LoRA, but even cheaper to train because the base is compressed. It's how hobbyists fine-tune models that would otherwise need a data center.

Inference Server

An inference server is software that hosts a model and answers requests — like a web server, but for AI. Ollama is an inference server. So are vLLM, Text Generation Inference (TGI), and the backend behind every cloud AI API.

Model Card

A model card is the documentation published alongside a model — what it was trained on, what it's good at, its limitations, known biases, license. Hugging Face pages are essentially model cards.

Benchmark

A benchmark is a standardized test used to measure and compare model capabilities — MMLU for general knowledge, HumanEval for coding, GPQA for science, and many more. When you see a new model claiming "state of the art," it's being measured against benchmarks like these.

Eval

An eval (short for evaluation) is a test you run against a model to measure how well it performs on a specific task — either a standard benchmark or a custom one you wrote for your own use case. "Write evals before you ship" has become a core practice for building AI products.

Popular Model Families

GPT

GPT is the model family from OpenAI that powers ChatGPT. Closed-source, API-only, and still the most recognized name in AI. GPT-5 is the current flagship.

Claude

Claude is Anthropic's model family, known for long context windows, strong reasoning, and a heavy focus on safety and alignment. Available through claude.ai, Claude Code, and the API.

Gemini

Gemini is Google DeepMind's flagship model, deeply integrated across Google's products — Search, Workspace, Android. Multimodal from the ground up.

Google DeepMind

DeepMind is the research lab behind Gemini. Originally a UK company Google acquired in 2014, it merged with Google Brain in 2023 to form Google DeepMind. Most of Google's AI research now happens under this banner.

Llama

Llama is Meta's open-weights model family. When Meta released Llama weights publicly in 2023, it effectively kickstarted the modern open-source AI movement. Most local LLM tooling exists because Llama did.

Mistral

Mistral is a French AI lab that ships both open-weight and closed models. Known for small-but-capable models and a European counterweight to the US-China AI duopoly.

DeepSeek

DeepSeek is a Chinese lab that shook the AI world in early 2025 by releasing high-performing reasoning models with open weights at a fraction of the usual training cost. Heavy users of Mixture of Experts.

Qwen

Qwen is Alibaba's open-weight model family, particularly strong at multilingual tasks and coding. Qwen models are among the most downloaded on Hugging Face.

Gemma

Gemma is Google's open-weight family — the smaller, freely-distributable cousin of Gemini. Designed to run locally and be fine-tuned easily.

Kimi

Kimi is the model family from Moonshot AI, a Chinese lab known for pushing extremely long context windows. Popular in Asia and increasingly visible globally.

Grok

Grok is xAI's model, built into X (formerly Twitter). Known for a less filtered tone and tight integration with real-time X data.

Phi

Phi is Microsoft's family of small language models, designed to punch above their weight. Good choices for running locally on laptops.

Image, Video, and Audio Tools

Stable Diffusion

Stable Diffusion is the open-weights image model that democratized AI art when it was released in 2022. It's still the foundation of most local image generation — every custom model, LoRA, and fine-tune in the scene descends from it.

Flux

Flux is an image model family from Black Forest Labs (founded by several of the original Stable Diffusion researchers). As of 2026 it's the leading open-weights image generator, especially for photorealism and text-in-image.

Midjourney

Midjourney is a closed image generation service, known for a distinct aesthetic quality that has made it a favorite among designers and marketers. Originally Discord-only, now has a proper web app.

DALL-E

DALL-E is OpenAI's image model. It lives inside ChatGPT, though the newer "GPT image" capability built into GPT-5 has largely replaced the standalone DALL-E experience.

Nano Banana

Nano Banana is the image generation model built into Google's Gemini. The name started as a leaked codename on LMArena, got adopted by users while testing it blind, and Google eventually embraced it as the public name. Known for strong character consistency and natural-language image editing.

ComfyUI

ComfyUI is a node-based visual interface for running local image and video generation models. Looks intimidating at first, but it's the power-user tool of choice — entire workflows get shared on Reddit and Civitai as single JSON files.

Civitai

Civitai is the community hub for sharing Stable Diffusion models, LoRAs, and generated images. Think of it as the Hugging Face of the local image gen world, with a more open posture toward NSFW and fan content.

Sora

Sora is OpenAI's text-to-video model. First shown in early 2024, it set the bar for what AI video could look like and kicked off the current video generation boom.

Veo

Veo is Google's text-to-video model. Available through Gemini and increasingly integrated into Google's creative products.

Kling

Kling is a Chinese video generation model (from Kuaishou), currently considered one of the most capable in the world for text-to-video and image-to-video. Often beats US competitors on motion quality.

Runway

Runway is a creative AI company that predates the current boom — originally a video editing tool, now a full video generation and editing suite. Their Gen-series models are industry favorites.

Higgsfield

Higgsfield is a video generation app focused on character motion and viral-style social media clips. Heavily popular on TikTok and Instagram for AI creators.

Suno

Suno is a text-to-music generator. Type a description, get a full song with vocals. Controversial among musicians, wildly popular with everyone else.

Udio

Udio is the other major text-to-music generator, often preferred for more controllable, higher-fidelity output. Suno and Udio are the two main players competing for the AI music crown.

ElevenLabs

ElevenLabs is the leading AI voice platform — text-to-speech, voice cloning, dubbing. If you've heard an uncannily human AI voice in the last year, it was probably ElevenLabs.

ASR (Automatic Speech Recognition)

ASR is AI that converts spoken audio into text. When you dictate into your phone, generate captions on a YouTube video, or transcribe a meeting, that's ASR running under the hood.

TTS (Text-to-Speech)

TTS is the opposite of ASR — AI that turns written text into spoken audio. Modern TTS systems like ElevenLabs are good enough that a casual listener often can't tell it's synthetic.

Whisper

Whisper is OpenAI's open-source speech recognition model, widely considered the best free ASR available. It powers a huge chunk of the transcription and voice-to-text tools you see today, often running locally.

The Downsides

Hallucination

A hallucination is when an AI states something false with full confidence. It's a fundamental quirk of how LLMs work — they don't "know" facts, they predict plausible-sounding tokens. RAG, tool use, and better training all reduce hallucinations but don't eliminate them.

AI Slop

AI slop is low-quality, mass-produced AI content created to occupy space rather than inform. It's the equivalent of pink-slime journalism, flooding search results, YouTube thumbnails, Amazon books, and social feeds.

Prompt Injection

Prompt injection is an attack where malicious instructions are hidden inside content the AI reads — a webpage, a document, an email — tricking the model into doing something its user didn't ask for. It's one of the hardest unsolved problems in AI security.

Jailbreak

A jailbreak is a prompt that tricks an AI into ignoring its safety guidelines. Jailbreaks and safety training exist in a constant cat-and-mouse cycle.

Bias

Bias in AI is when a model's outputs reflect biases in its training data — cultural, racial, gender, political, whatever. Every model trained on internet data has some baked in.

Alignment

Alignment is the research area focused on making sure AI does what we want, including in edge cases and over the long term. "Aligned" means the model behaves in line with human values and intentions.

Guardrails

Guardrails are the safety rules and filters built into an AI system — refusing certain requests, filtering harmful output, blocking sensitive topics. They're implemented through training, system prompts, and external checks.

The New SEO and AI Search World

SEO (Search Engine Optimization)

SEO is the traditional practice of optimizing content so it ranks in search engines like Google. Keywords, backlinks, headers, page speed, all the usual stuff. It's not going away, but it's no longer the whole picture.

GEO (Generative Engine Optimization)

GEO is the practice of optimizing content so AI tools — ChatGPT, Perplexity, Google's AI Overviews — cite and surface your site in their generated answers. Different game from SEO: you're optimizing for a summary, not a ranked list.

AEO (Answer Engine Optimization)

AEO is the practice of structuring content so it can be extracted as a direct answer by an AI system. Clear questions, clean headers, self-contained passages that can be lifted out and cited without losing context.

AIO (AI Optimization)

AIO is a catch-all term for optimizing for AI-powered search surfaces, especially Google's AI Overviews. Overlaps heavily with GEO and AEO depending on who's using the term.

LLMO (LLM Optimization)

LLMO is optimizing how LLMs understand and recall your brand, both in their training data and when they retrieve your pages live. Similar to GEO with more focus on entity and brand recognition over time.

AI Overview

An AI Overview is the AI-generated summary Google shows at the top of search results. It pulls from multiple sources and usually cites a few of them. Getting cited there is the new "ranking on the first page."

Share of Voice (SOV)

Share of voice, in AI search, is how often your brand is mentioned in AI responses compared to competitors for a given set of prompts. It's the AI-era equivalent of ranking position.

AI Visibility

AI visibility is a measure of how often and how prominently your brand appears in AI-generated answers across tools like ChatGPT, Perplexity, and Google AI Overviews.

Semantic Density

Semantic density is how much meaning is packed into a sentence or passage. AI systems favor high-density content because it gives them more signal per token when they're generating summaries.

Citation

A citation, in the AI search world, is when an AI response names or links to your site as a source. Citations are the new backlinks.

Culture, Buzzwords, and Edges

Vibe Coding

Vibe coding is building software by describing what you want to an AI and letting it write most of the code. The term was coined by Andrej Karpathy in early 2025 and has become shorthand for AI-assisted development in general. I wrote a whole post on whether it's the future.

AI Native

AI native describes products, companies, or people that were built with AI at the center from day one — not bolted on after the fact. It's the 2020s version of "digital native."

Copilot

Copilot is the general term for an AI assistant embedded inside another tool — GitHub Copilot for code, Microsoft Copilot for Office, Cursor for writing. The framing implies AI as helper, not replacement.

Chatbot

A chatbot is any conversational software interface. It used to mean scripted decision trees; now it mostly means an LLM behind a chat window.

Open Source / Open Weights

Open source AI means the model's code and often its weights are publicly available for anyone to use, modify, or self-host. Meta's Llama, Mistral, and DeepSeek are the best-known examples.

Closed Model

A closed model is one where the weights are private and you can only access it through an API — OpenAI's GPT, Anthropic's Claude, Google's Gemini. Most frontier models today are closed.

API (Application Programming Interface)

An API is how developers talk to a model programmatically — send a prompt, get a response, pay per token. Every major AI tool has one, and it's usually where the real integration work happens.

AGI (Artificial General Intelligence)

AGI is hypothetical AI that matches or exceeds human intelligence across essentially all tasks. It's the long-standing goal of the field and the source of most sci-fi anxiety about AI. Depending on whom you ask, it's five years away or impossible.

ASI (Artificial Superintelligence)

ASI is the step past AGI — AI that's dramatically smarter than humans at everything. Right now it's mostly a theoretical discussion, but it's a real topic in alignment research.

Turing Test

The Turing test is a 1950 thought experiment where a machine is considered "intelligent" if a human can't reliably tell they're talking to a machine. Modern LLMs routinely pass casual versions of it, which is why the test feels less meaningful than it used to.

Keeping Up

I'll update this as the vocabulary keeps growing — and it will. If there's a term you keep hearing that isn't here, or a definition you think I got wrong, let me know. A glossary is only useful if it keeps up with the language.

And remember, if you see people start throwing around new AI terms you've never heard of, chances are you're not the only one trying to keep up. Sometimes they're buzz words, sometimes they're marketing names that a company invented or a user coined, and sometimes they're genuinely technical terms that are traveling from niche research circles into mainstream conversation. Either way, it's always worth asking: "What does that actually mean?"